There are a number of key business reasons you should know how much demand exists within an online niche.

The most common ones are:

- Assess your business’s current market share

- Identify opportunities for expansion

- Analyze investment potential

Calculating the total addressable market (TAM) offline is an age-old practice when it comes to assessing and deploying investment capital, but it’s always been a bit of a fickle process — data sources are mediocre at best, and you are almost always left approximating.

While a degree of approximation is still required when calculating online market size, we can at least use more quantitative data sources, like search data.

We are hired with increasing frequency to help companies determine their online TAM, almost exclusively for one of the 3 reasons I’ve mentioned above.

We tend to perform these analyses mostly for ecommerce companies, but we are starting to work with more and more enterprise software and SaaS companies to help them define both their keyword strategies and even product roadmaps informed by TAM data.

Attention: Before we get any further into the process, this section that you just read is massively important.

Everything you and your team do from here on must be goal-oriented. Whether it be for one of the reasons listed above or any other you may have in mind, you will waste your time collecting data and sorting through thousands and thousands of keywords if you do not have a focused goal in mind.

Putting Keyword Data to Work

As with almost all quantitative approaches, the more data we have the better – we recommend collecting as much data as possible in the beginning stage because it will decrease the number of times you will need to filter.

We collect data from the following four sources:

1. AdWords/PLA

One of the best initial data sources to start with is historical AdWords, if you have it. This becomes even more powerful if you’re an ecommerce website and you have at least 12 months of historical PLA data.

The advantage of having AdWords data is that we can match up clicks and conversions at the keyword level and infer which head terms and modifiers are driving the most conversions for our client.

2. Google Search Console

If you are not an ecommerce brand and PLA data is not available to you or your team, the next data source (and one just as important as historical AdWords data) is Google Search Console (GSC). We use the Google Sheets plugin SuperMetrics to pull in the last 16 months of raw search query data from GSC.

This allows us to immediately grow the list of keywords and we can begin to see what users in that particular industry are looking for.

3. Competitors

We use Ahrefs to identify which sites have the largest keyword footprints, downloading all of their terms and then also pulling in all the data from their lists of top competing domains.

At this point, we’re only focused on expanding our total list of terms and not worried so much about all the additional keyword-level data; we’ll get that later.

4. Keyword Tools

Lastly, we collect keywords from search tools (TermExplorer or KeywordKeg or WonderSearch) that scrape Google Suggest, Wikipedia and eBay.
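To make the collection step concrete, here is a minimal sketch of how exports from the four sources could be merged into one de-duplicated master list. The source names, sample keywords, and the `normalize` and `merge_sources` helpers are all hypothetical; real exports would come from your own AdWords, GSC, Ahrefs, and keyword tool files.

```python
def normalize(term: str) -> str:
    """Lowercase and collapse whitespace so near-identical strings match."""
    return " ".join(term.lower().split())


def merge_sources(sources: dict) -> dict:
    """Map each normalized keyword to the set of sources it was found in."""
    merged = {}
    for source_name, terms in sources.items():
        for term in terms:
            key = normalize(term)
            if key:  # skip blank rows from messy exports
                merged.setdefault(key, set()).add(source_name)
    return merged


# Hypothetical sample data standing in for real exports.
sources = {
    "adwords": ["Social Security Benefits", "apply for social security"],
    "gsc": ["social security  benefits", "social security office near me"],
    "competitors": ["apply for social security"],
    "keyword_tools": ["social security calculator"],
}

master = merge_sources(sources)
for keyword, found_in in sorted(master.items()):
    print(keyword, sorted(found_in))
```

Keeping track of which sources each term came from is optional, but it makes it easier to see later which terms only your competitors surface.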

Identifying Keyword Modifier Patterns

First, we need to sanitize this data so we can extract insights from it to use to expand our term list.

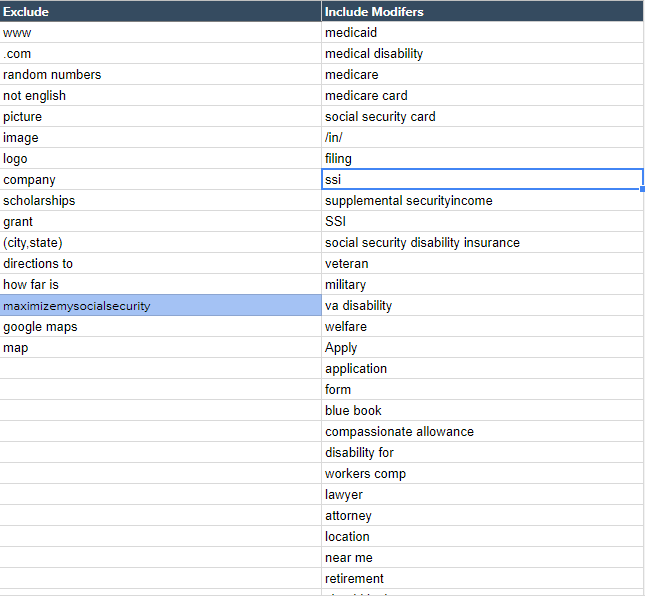

To do this, our SEO Analysts painstakingly review the term lists (often between 30,000 and 50,000 terms) looking for modifier patterns to then query against the list, and build new lists of included modifiers and excluded modifiers, as well as pulling out core head terms.
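A manual review is still where the judgment happens, but a simple frequency count can help surface candidate modifiers to look at first. The sketch below is only an aid under our own assumptions: the `modifier_candidates` helper, the sample terms, and the idea of treating every non-head-term word as a candidate are illustrative, not a description of any particular tool.

```python
from collections import Counter


def modifier_candidates(terms, head_terms, top_n=10):
    """Count how many terms each non-head-term word appears in."""
    head_tokens = {tok for head in head_terms for tok in head.lower().split()}
    counts = Counter(
        tok
        for term in terms
        for tok in set(term.lower().split())  # count each word once per term
        if tok not in head_tokens
    )
    return counts.most_common(top_n)


# Hypothetical sample list; a real list would hold tens of thousands of terms.
terms = [
    "apply for social security",
    "social security calculator",
    "social security benefits calculator",
    "how to apply for social security",
]
candidates = modifier_candidates(terms, head_terms=["social security"])
for word, term_count in candidates:
    print(word, term_count)
```

Words like "for" and "to" will show up high in the counts, which is exactly why an analyst still needs to decide what belongs on the included and excluded lists.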

You’ll want to score each modifier as Included or Excluded in its own column, and then build this into the formula logic for aggregating all the data for the report.
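As a rough illustration of the Included/Excluded scoring, the sketch below labels each term by checking its words against the two modifier lists. The modifier sets, the sample terms, the `score_term` helper, and the "Unscored" label are hypothetical; in a spreadsheet the same logic would live in a formula column.

```python
# Hypothetical modifier lists built during the manual review.
INCLUDED = {"apply", "benefits", "calculator"}
EXCLUDED = {"jobs", "login"}


def score_term(term, included=INCLUDED, excluded=EXCLUDED):
    """Label a term by whole-word modifier matches; exclusions win ties."""
    tokens = set(term.lower().split())
    if tokens & excluded:
        return "Excluded"
    if tokens & included:
        return "Included"
    return "Unscored"  # assumption: unmatched terms go back for manual review


terms = [
    "social security benefits calculator",
    "social security jobs",
    "social security benefits login",
    "social security office",
]
scored = {term: score_term(term) for term in terms}
for term, label in scored.items():
    print(f"{label:9} {term}")
```

Letting an excluded modifier override an included one is a design choice we are assuming here; it keeps terms like "benefits login" out of the report totals.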

For ecommerce sites these head terms are typically going to be your category and subcategory terms. We exclude branded terms because they are extremely difficult to rank for and aren’t worth the effort.

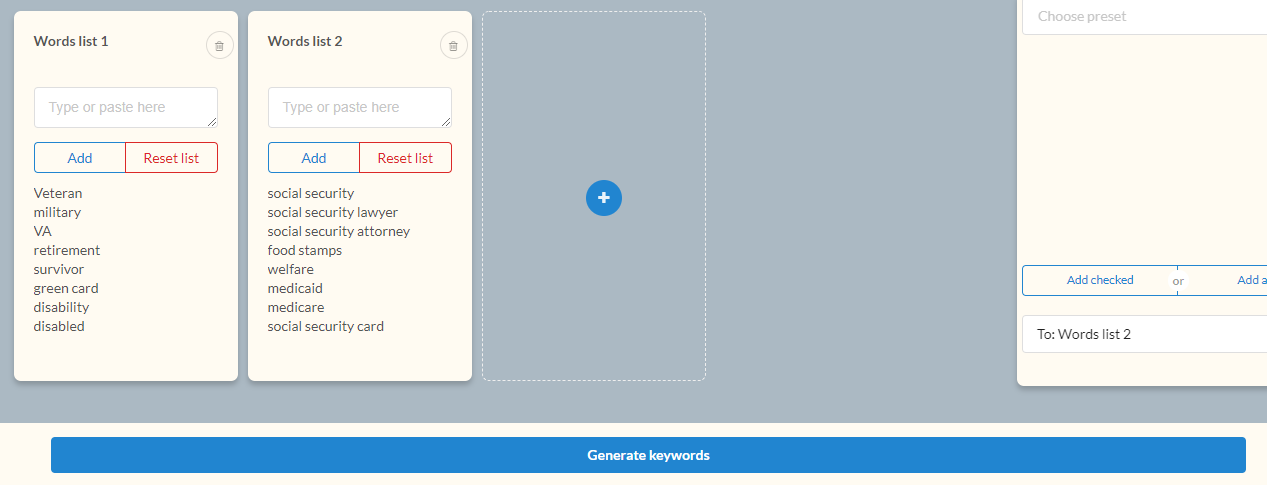

Here’s an example of what one of these lists looks like:

Next, we take the list of included modifiers and head terms and multiply it using a keyword multiplier tool, giving us a new base term list.
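If you don’t have a keyword multiplier tool handy, the multiplication itself is just a cross product of head terms and modifiers. Here is a minimal sketch; the `multiply_keywords` helper and the sample inputs are hypothetical, and producing both word orders is our assumption about what a typical multiplier tool outputs.

```python
from itertools import product


def multiply_keywords(head_terms, modifiers):
    """Combine every head term with every modifier, in both word orders."""
    combos = []
    for head, modifier in product(head_terms, modifiers):
        combos.append(f"{modifier} {head}")
        combos.append(f"{head} {modifier}")
    # dict.fromkeys removes duplicates while preserving order.
    return list(dict.fromkeys(combos))


# Hypothetical inputs taken from the included modifier and head term lists.
head_terms = ["social security", "disability benefits"]
modifiers = ["apply", "calculator"]

base_terms = multiply_keywords(head_terms, modifiers)
for term in base_terms:
    print(term)
```

Many of the generated combinations will have no search volume at all, so this list is a starting point for lookups rather than a finished term list.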

Expanding the List Using Modifier Cohorts

Now that we have identified patterns of terms that include topical keywords and intent or product focused modifiers, we can get to scraping.

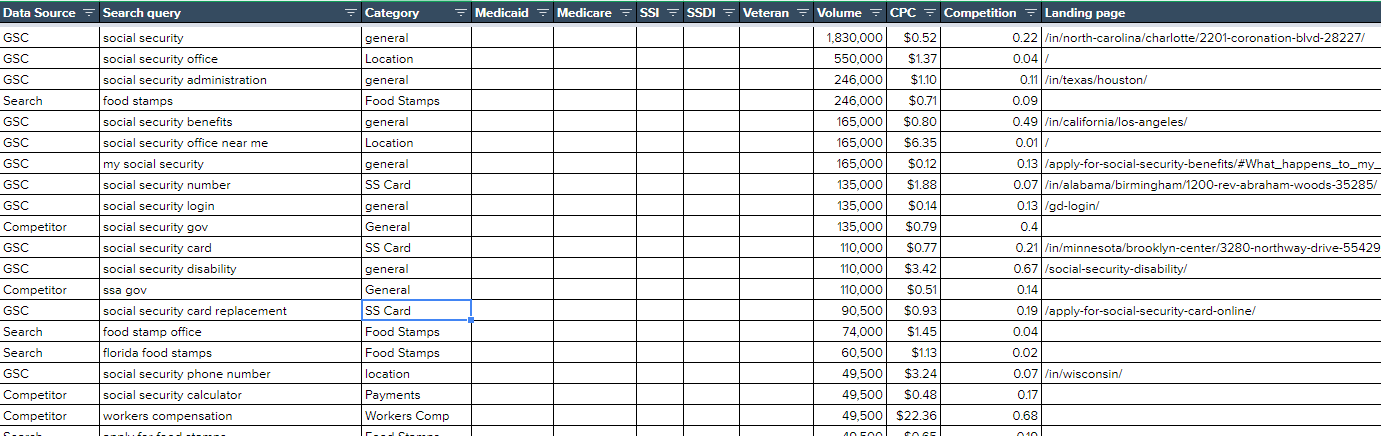

Additionally, it’s worth mentioning that keywords can also be grouped into cohorts using keyword-level metrics, such as highest traffic, lowest difficulty, lowest CPC, and so on – like this:

Once we’ve blown out our total list of terms to a place we feel is representative of the majority of the niche online, which involves pulling the keyword ranking data for the top 10-20 websites within each modifier cohort, we go to work expanding the list even more.

At this stage in the process we use Term Explorer to expand the list to include all related keywords across Wikipedia, Google Suggest, Amazon, and eBay.

Here is what the UI looks like inside of Term Explorer for our Social Security example once we inputted our entire list:

We can then export the data from Term Explorer (upwards of 200,000 keywords sometimes), add any newly found unique keywords to the list, and query the modifier lists against the new list. Any keywords that are not relevant can be removed from the data set.

Refining The List of Keywords

A lot of the time there are going to be terms in the list that inflate the total monthly search volume (MSV). This is because search engines treat terms that are similar in nature, or that include the same words, as having the same MSV, CPC, and Competition.

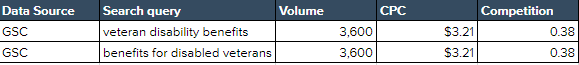

Here is an example:

Google groups ‘veteran disability benefits’ and ‘benefits for disabled veterans’ as the same keyword, so we must remove these or the MSV and total keyword count will be inflated.

This is most easily done using the AdWords API, but it can also be done by copying and pasting all your terms into Keyword Planner, which as a bonus will also sanitize your term list by grouping synonyms that Google sees as so semantically related that it returns the same volume, CPC, and difficulty data for each.
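If you already have volume data exported, a rough local approximation of this clean-up is to collapse rows that share identical metrics and the same core words. The sketch below is a heuristic of our own, not how Google groups terms: the stopword list, the five-character prefix used as a crude stem, the `collapse_variants` helper, and all of the numbers are made up for illustration.

```python
# Assumption: a tiny stopword list is enough for this illustration.
STOPWORDS = {"for", "the", "of", "a", "an", "to", "in"}


def variant_key(term, prefix_len=5):
    """Crude fingerprint: word prefixes, ignoring order and stopwords."""
    tokens = [t for t in term.lower().split() if t not in STOPWORDS]
    return frozenset(t[:prefix_len] for t in tokens)


def collapse_variants(rows):
    """Keep the first row for each (fingerprint, MSV, CPC, competition) group."""
    seen = set()
    kept = []
    for row in rows:
        key = (variant_key(row["term"]), row["msv"], row["cpc"], row["competition"])
        if key not in seen:
            seen.add(key)
            kept.append(row)
    return kept


# Made-up metrics for illustration only.
rows = [
    {"term": "veteran disability benefits", "msv": 1000, "cpc": 2.5, "competition": 0.4},
    {"term": "benefits for disabled veterans", "msv": 1000, "cpc": 2.5, "competition": 0.4},
    {"term": "veterans pension", "msv": 500, "cpc": 1.1, "competition": 0.2},
]

deduped = collapse_variants(rows)
print(len(rows), "->", len(deduped), "terms")
print("Total MSV:", sum(row["msv"] for row in deduped))
```

Requiring both identical metrics and matching core words keeps unrelated terms that happen to share the same volume from being merged by accident.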

Understanding Search Intent

Understanding exactly what a user wants out of their search is one of the most important aspects of keyword research, if not the most important.

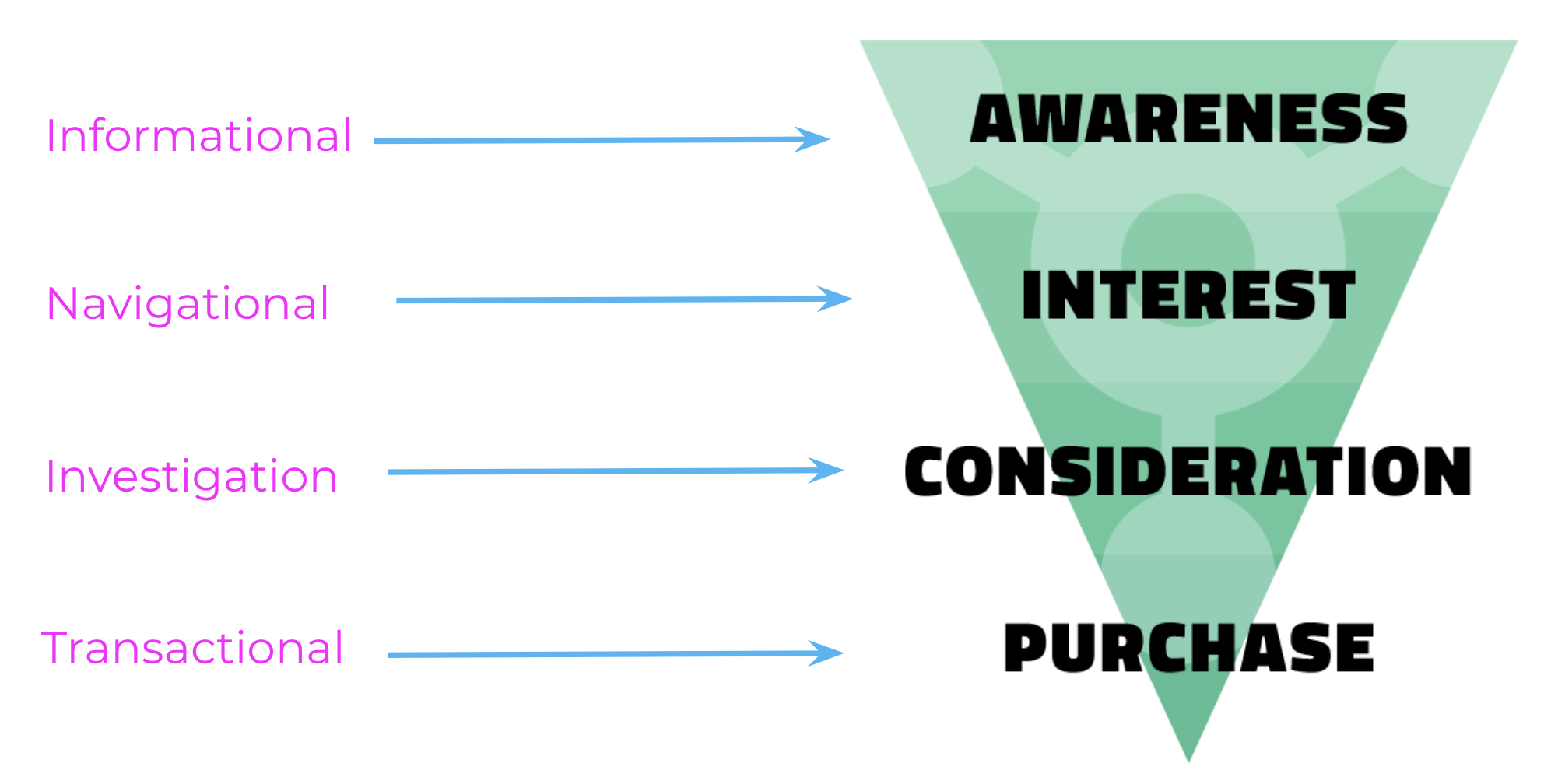

If your site or specific page that you are conducting keyword research for is targeting a term that is informational in nature but your actual intention is to target users in the commercial phase of the sales funnel, you are not going to see any results.

Check out our video about search intent (21:22 long) here on our YouTube channel.

Here is the search intent funnel that we use during this process. Notice that it is directly related to the sales funnel:

Mapping Search Intent

Once you have a firm understanding of what search intent is, it’s time to put the funnel to use.

This process can often become quite tedious, but a great place to start is with the included modifier list that you created just a few steps ago.

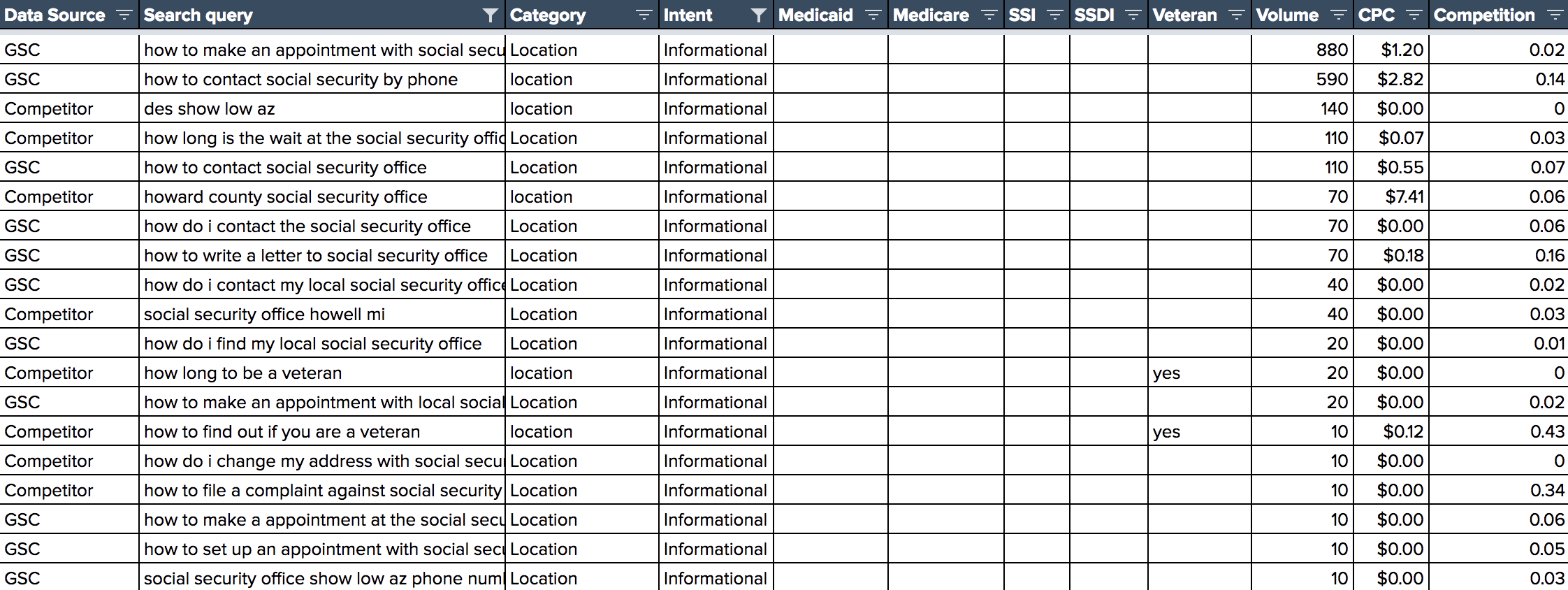

Here’s an example from the Social Security research our team put together:

Informational terms are perhaps the easiest to identify in a data set. You can start by using modifiers “how”, “why”, “when”, or “where” to begin the mapping process.

These terms show that the user is not yet ready to apply, buy, or sign anything, but is looking for the best resource in order to take that next step.

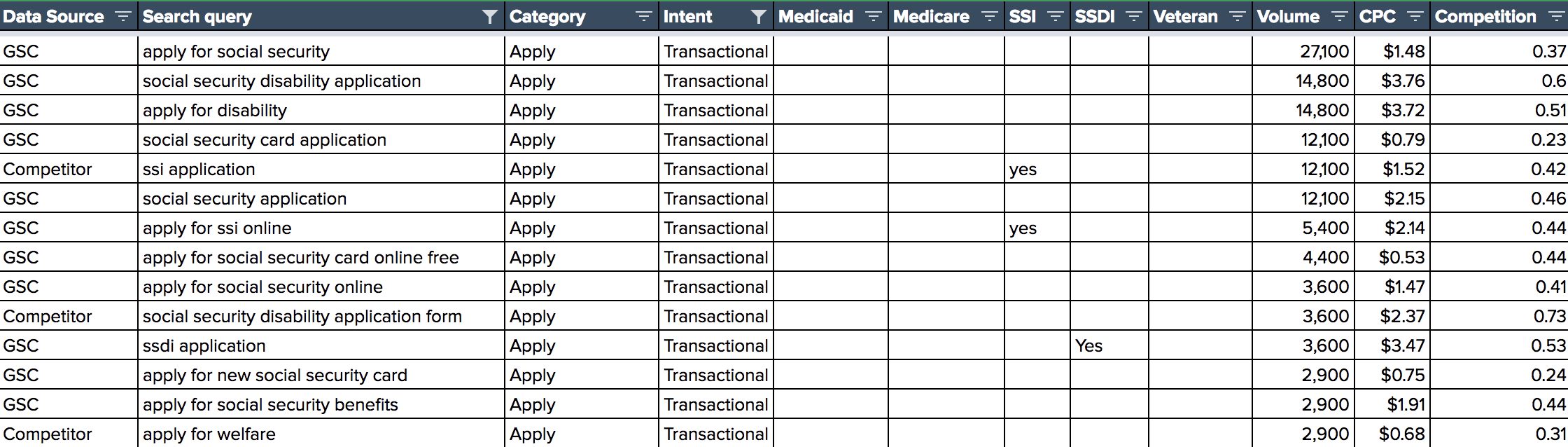

Once you are at the bottom of the funnel you are looking for terms that are transactional in nature. Users here are using modifiers like “apply” or “file” or “application” which shows they are looking for a place to fill out a form or application.

By the way, when we say “transactional” that doesn’t necessarily mean an exchange of anything of monetary value but instead whatever the intent of your site or page is.

Whether it be getting users to enter their email, request a demo, or fill out a contact form, there are transactional intent keywords for any business.
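A first pass at intent mapping can be automated with the same modifier matching. The sketch below uses only the modifiers mentioned above; the `map_intent` helper, the "unmapped" label, and the choice to check transactional modifiers first are our assumptions, and you would extend the lists with the modifiers from your own niche.

```python
# Modifiers taken from the examples in this post; extend for your niche.
INTENT_MODIFIERS = {
    "transactional": {"apply", "file", "application"},
    "informational": {"how", "why", "when", "where"},
}


def map_intent(term):
    """Return the first intent whose modifiers appear as whole words."""
    tokens = set(term.lower().split())
    # Assumption: transactional is checked first, so a term carrying both
    # kinds of modifier is mapped to the bottom of the funnel.
    for intent, modifiers in INTENT_MODIFIERS.items():
        if tokens & modifiers:
            return intent
    return "unmapped"  # left for manual review


terms = [
    "why is social security taxed",
    "social security application",
    "social security office",
]
for term in terms:
    print(f"{map_intent(term):14} {term}")
```

Matching on whole words rather than substrings matters here: it stops "file" from matching inside a word like "profile".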

Creating a Priority List

This is the best part of the process by far. By this time you have collected data, gone through it line by line, identified modifiers, expanded the list using modifier cohorts, and begun to understand search intent for your target audience.

When deciding which terms to prioritize there are a few different things you can do:

- Sort the list by monthly search volume, then pick the top 100 terms that your site does not rank in the top 5 for.

- Add in a competitor layer of data so you can find the low hanging fruit that competitors are not optimized for or targeting.

- Filter for terms with commercial intent and then sort descending by current keyword position, to identify keywords that convert.
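The first option in the list above can be sketched in a few lines. The `top_opportunities` helper, the field names, and the sample rows are hypothetical, and we are assuming that a missing position means the site does not rank for the term at all.

```python
def top_opportunities(rows, limit=100, rank_cutoff=5):
    """Highest-volume terms where the site is outside the top positions."""
    candidates = [
        row for row in rows
        if row["position"] is None or row["position"] > rank_cutoff
    ]
    return sorted(candidates, key=lambda row: row["msv"], reverse=True)[:limit]


# Made-up rows for illustration; position None means "not ranking".
rows = [
    {"term": "social security benefits", "msv": 5000, "position": 3},
    {"term": "social security calculator", "msv": 4000, "position": 12},
    {"term": "apply for social security", "msv": 9000, "position": None},
    {"term": "social security office hours", "msv": 100, "position": 7},
]

priority = top_opportunities(rows, limit=2)
for row in priority:
    print(row["term"], row["msv"], row["position"])
```

The other two options follow the same shape: swap the filter for a competitor or intent condition and change the sort key.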

In Conclusion

Keyword research has evolved. To design holistic and comprehensive SEO strategies, you need to be aware of the key metrics that exist within your online SEO landscape:

- The size of your market (both in terms of the number of keywords and the average search volume of those terms).

- Who your online competitors are (these often vary significantly from your offline competitors).

- Your current market share, and areas where you have little to no visibility (which usually identifies areas where your product or service offering is either weak or doesn’t exist).

We have helped FTF clients identify literally hundreds of millions of dollars in additional SEO revenue opportunity using this data.

Put in the time, aggregate and organize all the data (we’ve given you the process), and identify those large growth opportunities.

A Special Thank You

This process has been refined significantly throughout 2018, and it’s thanks directly to the hard (and brilliant) work of 2 of our FTF SEO Team Members: Matt DiMenno and Kurtis Nysmith. Follow them… they’re doing big things.

Questions? Criticisms? Ideas?

Please drop me a comment below. Thanks.